There is a good way to install the NVIDIA driver correctly and avoid problems later. Here is a great how-to: step by step and easy to follow.

But let me correct it for the 10.04 release.

First of all (before the steps), download the "dkms" package from the bottom of the post on the linked page, and the NVIDIA driver from nvidia.com, into your home directory.

Step 1: remove the drivers. Change the "180" to "190" or "195"; I'm not sure which version Ubuntu ships at the moment.

At step 2, edit /etc/modprobe.d/blacklist.conf. Add two new entries to the end:

blacklist nv

blacklist nouveau
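If you would rather script this step than edit the file by hand, here is a minimal Python sketch, not part of the linked how-to. The function name `add_blacklist_entries` is made up for illustration; it appends only the entries that are not already in the file, so running it twice does no harm.

```python
from pathlib import Path


def add_blacklist_entries(conf_path, modules=("nv", "nouveau")):
    """Append 'blacklist <module>' lines that are missing; return the lines added."""
    path = Path(conf_path)
    text = path.read_text() if path.exists() else ""
    present = {line.strip() for line in text.splitlines()}
    missing = [f"blacklist {m}" for m in modules if f"blacklist {m}" not in present]
    if missing:
        with path.open("a") as fh:
            # Start on a fresh line if the file does not end with a newline.
            if text and not text.endswith("\n"):
                fh.write("\n")
            for line in missing:
                fh.write(line + "\n")
    return missing
```

Call it with the path of the blacklist file from step 2. The script has to run as root (for example via sudo), since the file is not writable by normal users.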

Then reboot and select recovery mode at the boot menu. Choose "root mode with networking" (or whatever it is called; it's at the bottom and you will be able to identify it, so don't worry about the instructions. :))

When it boots, type your root password. Then type: init 3 . Log in again (yay).

Now, install the driver with sudo sh ./NV* . There will be an error about "distributor provided.."; ignore it and just agree, yes yes (more, grep, fsck :)).

After it finishes, run sudo nvidia-xconfig . THEN run the sudo sh ./installdkms* part. After it finishes, you are done; reboot.

Yeah, I'm aware of the how-to and that it's 'harder' than the "install restricted modules" route. However, a lot of people have noticed issues and anomalies with the default driver. This way you get the NVIDIA binary driver, more recent than the one Ubuntu ships, and it won't be a problem during kernel upgrades. Also, you can upgrade the driver by hand whenever you want. If you get stuck, comment and ask. (Check which part seems hard, and check that you can find that blacklist file and such before you dive in.)

And yeah, after this, we'll continue with the CUDA stuff. :)

EDIT: Before you try the long guide and install everything again, you might solve the error "(DLL) initialization routine failed. Error loading caffe2_detectron_ops_gpu.dll" by downgrading from torch=1.7.1 to torch=1.6.0, according to this (I have not tested it).

This is a selection of guides that I used.

The solution here draws on many more steps; see this in combination with this. A good general starting point for cuda questions is this related Super User question.

Here is the solution:

- Install cmake:

https://cmake.org/download/

Add to the PATH environment variable:

C:\Program Files\CMake\bin

- Install git, which includes mingw64, which in turn provides curl: https://git-scm.com/download/win

Add to the PATH environment variable:

C:\Program Files\Git\cmd

C:\Program Files\Git\mingw64\bin (for curl)

- As the compiler, I chose

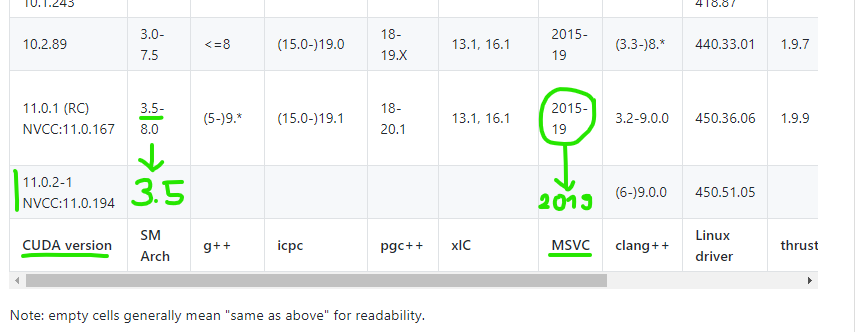

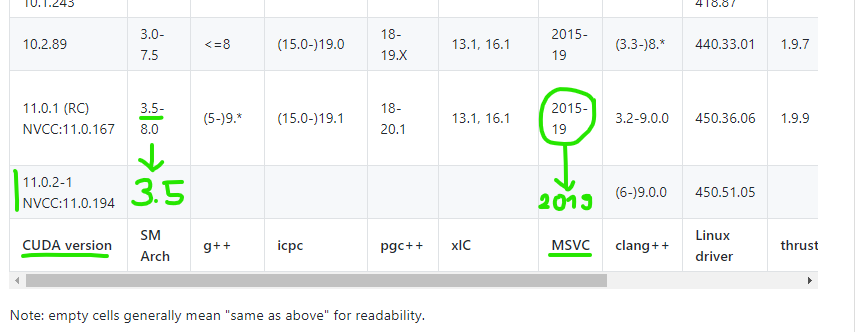

MSVC 2019 for the CUDA compiler driver NVCC:10.0.194 since that can handle CUDA cc 3.5 according to https://gist.github.com/ax3l/9489132. Of course, you will want to check your own current driver version.

Note that the green arrows only indicate that the cell above is copied into an empty cell below. This is by design of the table and means nothing else here.

The green marks and notes are just the version numbers relevant in my case (3.5 and 2019). What is relevant for you depends entirely on your own setup.

Running MS Visual Studio 2019 16.7.1 and choosing --> Individual components lets you install:

- most recent MSVC v142 - VS 2019 C++-x64/x86-Buildtools (v14.27) (the most recent x64 version at that time)

- most recent Windows 10 SDK (10.0.19041.0) (the most recent x64 version at that time).

As my graphics card's CUDA Capability Major/Minor version number is 3.5, I can install the latest possible cuda 11.0.2-1 available at this time. In your case, always look up a current version of the table above again and find the best possible cuda version for your CUDA cc. The cuda toolkit is available at https://developer.nvidia.com/cuda-downloads.

Change the PATH environment variable:

SET PATH=C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.0\bin;%PATH%

SET PATH=C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.0\extras\CUPTI\lib64;%PATH%

SET PATH=C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.0\include;%PATH%

- Download cuDNN from https://developer.nvidia.com/cudnn-download-survey. You have to register to do this. Then install cuDNN by copying the latest cuDNN zip-extract to the following directory:

C:\Program Files\NVIDIA cuDNN

- Change the PATH environment variable:

SET PATH=C:\Program Files\NVIDIA cuDNN\cuda;%PATH%
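With this many PATH additions it is easy to miss one. Here is a minimal Python sketch for checking them, assuming Windows-style paths separated by `;`. The helper name `missing_from_path` is hypothetical and not part of any of the tools above.

```python
import ntpath
import os


def _norm(path):
    """Normalize a Windows path: drop quotes and trailing slashes, ignore case."""
    return ntpath.normcase(ntpath.normpath(path.strip().strip('"')))


def missing_from_path(required_dirs, path_value=None, sep=";"):
    """Return the directories from required_dirs that are not listed in PATH."""
    if path_value is None:
        path_value = os.environ.get("PATH", "")
    entries = {_norm(p) for p in path_value.split(sep) if p.strip()}
    return [d for d in required_dirs if _norm(d) not in entries]
```

For example, `missing_from_path([r"C:\Program Files\CMake\bin", r"C:\Program Files\Git\cmd"])` returns an empty list when both directories are on the PATH of the current prompt. It only compares strings; it does not check that the directories exist.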

- Open the Anaconda prompt and, ideally, create a new virtual environment for pytorch with a name of your choice, according to https://stackoverflow.com/questions/48174935/conda-creating-a-virtual-environment:

conda create -n myenv

- Install packages that may be needed:

(myenv) C:\Users\Admin>conda install numpy ninja pyyaml mkl mkl-include setuptools cmake cffi typing_extensions future six requests

In the Anaconda or cmd prompt, clone pytorch into a directory of your choice. I am using my Downloads directory here:

C:\Users\Admin\Downloads\Pytorch>git clone https://github.com/pytorch/pytorch

In the Anaconda or cmd prompt, recursively update the cloned directory:

C:\Users\Admin\Downloads\Pytorch\pytorch>git submodule update --init --recursive

Since there is poor support for MSVC OpenMP in detectron, we need to build pytorch from source with MKL from source so Intel OpenMP will be used, according to this developer's comment and referring to https://pytorch.org/docs/stable/notes/windows.html#include-optional-components. So how do we do this?

Install 7z from https://www.7-zip.de/download.html.

Add to the PATH environment variable:

C:\Program Files\7-Zip\

Now download the MKL source code (please check the most recent version in the link again):

curl https://s3.amazonaws.com/ossci-windows/mkl_2020.0.166.7z -k -O

7z x -aoa mkl_2020.0.166.7z -omkl

My chosen destination directory was C:\Users\Admin\mkl.

Also needed according to the link:

conda install -c defaults intel-openmp -f

- Open the Anaconda prompt and activate your virtual environment, whatever you named it:

activate myenv

- Change to your chosen pytorch source code directory.

(myenv) C:\WINDOWS\system32>cd C:\Users\Admin\Downloads\Pytorch\pytorch

- Now, before starting cmake, we need to set a lot of variables.

Since we use mkl as well, we need the following (point the mkl paths at the directory where you actually extracted it):

(myenv) C:\Users\Admin\Downloads\Pytorch\pytorch>set "CMAKE_INCLUDE_PATH=C:\Users\Admin\Downloads\Pytorch\mkl\include"

(myenv) C:\Users\Admin\Downloads\Pytorch\pytorch>set "LIB=C:\Users\Admin\Downloads\Pytorch\mkl\lib;%LIB%"

(myenv) C:\Users\Admin\Downloads\Pytorch\pytorch>set USE_NINJA=OFF

(myenv) C:\Users\Admin\Downloads\Pytorch\pytorch>set CMAKE_GENERATOR=Visual Studio 16 2019

(myenv) C:\Users\Admin\Downloads\Pytorch\pytorch>set USE_MKLDNN=ON

(myenv) C:\Users\Admin\Downloads\Pytorch\pytorch>set "CUDAHOSTCXX=C:\Program Files (x86)\Microsoft Visual Studio\2019\Community\VC\Tools\MSVC\14.27.29110\bin\Hostx64\x64\cl.exe"

(myenv) C:\Users\Admin\Downloads\Pytorch\pytorch>python setup.py install --cmake
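If you prefer not to retype the set commands for every attempt, the same variables can be collected in a small Python script. This is only a sketch under the assumptions of this answer: the function names `make_build_env` and `run_build` are made up, and the MKL directory and cl.exe path are whatever you pass in.

```python
import ntpath
import os
import subprocess
import sys


def make_build_env(mkl_dir, cl_path, base_env=None):
    """Return a copy of the environment with the build variables from this answer."""
    env = dict(os.environ if base_env is None else base_env)
    env["CMAKE_INCLUDE_PATH"] = ntpath.join(mkl_dir, "include")
    # Put the MKL lib directory in front of any existing LIB entries.
    lib_parts = [ntpath.join(mkl_dir, "lib"), env.get("LIB", "")]
    env["LIB"] = ";".join(part for part in lib_parts if part)
    env["USE_NINJA"] = "OFF"
    env["CMAKE_GENERATOR"] = "Visual Studio 16 2019"
    env["USE_MKLDNN"] = "ON"
    env["CUDAHOSTCXX"] = cl_path
    return env


def run_build(source_dir, env):
    """Run 'python setup.py install --cmake' in the pytorch source directory."""
    subprocess.run(
        [sys.executable, "setup.py", "install", "--cmake"],
        cwd=source_dir,
        env=env,
        check=True,
    )
```

Run it from the activated environment so that `sys.executable` is that environment's Python, then call `run_build` with the pytorch source directory and the result of `make_build_env`.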

Note: Let this run overnight. The install above took 9.5 hours and ties up the computer.

Important: Ninja can parallelize CUDA build tasks.

You may be able to use ninja to speed up the process, according to https://pytorch.org/docs/stable/notes/windows.html#include-optional-components. In my case, the install did not succeed using ninja. You can still try: set CMAKE_GENERATOR=Ninja (after having installed it first with pip install ninja). You might also need set USE_NINJA=ON, or, even better, leave out set USE_NINJA completely and use just set CMAKE_GENERATOR=Ninja (see Switch CMake Generator to Ninja); perhaps this will work for you. It is definitely possible to use ninja; see this comment of a successful ninja-based installation.

[I might also be wrong in expecting ninja to work by a pip install in my case. Perhaps we also need to get the source code of ninja instead, perhaps also using curl, as was done for MKL. Please comment or edit if you know more about it, thank you.]

In my case, the build ran through using mkl and without using ninja.

Now a side remark. If you are using spyder: mine, at least, was corrupted by the cuda install:

(myenv) C:\WINDOWS\system32>spyder

_cffi_ext.c
C:\Users\Admin\anaconda3\lib\site-packages\zmq\backend\cffi\__pycache__\_cffi_ext.c(268): fatal error C1083: Cannot open include file: "zmq.h": No such file or directory
Traceback (most recent call last):
  File "C:\Users\Admin\anaconda3\Scripts\spyder-script.py", line 6, in <module>
    from spyder.app.start import main
  File "C:\Users\Admin\anaconda3\lib\site-packages\spyder\app\start.py", line 22, in <module>
    import zmq
  File "C:\Users\Admin\anaconda3\lib\site-packages\zmq\__init__.py", line 50, in <module>
    from zmq import backend
  File "C:\Users\Admin\anaconda3\lib\site-packages\zmq\backend\__init__.py", line 40, in <module>
    reraise(*exc_info)
  File "C:\Users\Admin\anaconda3\lib\site-packages\zmq\utils\sixcerpt.py", line 34, in reraise
    raise value
  File "C:\Users\Admin\anaconda3\lib\site-packages\zmq\backend\__init__.py", line 27, in <module>
    _ns = select_backend(first)
  File "C:\Users\Admin\anaconda3\lib\site-packages\zmq\backend\select.py", line 28, in select_backend
    mod = __import__(name, fromlist=public_api)
  File "C:\Users\Admin\anaconda3\lib\site-packages\zmq\backend\cython\__init__.py", line 6, in <module>
    from . import (constants, error, message, context,
ImportError: DLL load failed while importing error: The specified module could not be found.

Installing spyder over the existing installation again:

(myenv) C:\WINDOWS\system32>conda install spyder

Opening spyder:

(myenv) C:\WINDOWS\system32>spyder

- Test your pytorch install.

I did it according to this:

import torch

torch.__version__

Out[3]: '1.8.0a0+2ab74a4'

torch.cuda.current_device()

Out[4]: 0

torch.cuda.device(0)

Out[5]: <torch.cuda.device at 0x24e6b98a400>

torch.cuda.device_count()

Out[6]: 1

torch.cuda.get_device_name(0)

Out[7]: 'GeForce GT 710'

torch.cuda.is_available()

Out[8]: True
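The same checks can be bundled into one small script instead of typing them one by one. This is a minimal sketch; the function name `cuda_report` is made up, and it only uses the torch calls shown above plus `torch.cuda.get_device_capability`. It also copes with torch not being installed in the current environment.

```python
import importlib.util


def cuda_report():
    """Collect basic facts about the torch install and its CUDA support."""
    report = {"torch_installed": importlib.util.find_spec("torch") is not None}
    if not report["torch_installed"]:
        return report

    import torch

    report["version"] = torch.__version__
    report["cuda_available"] = torch.cuda.is_available()
    if report["cuda_available"]:
        index = torch.cuda.current_device()
        report["device_count"] = torch.cuda.device_count()
        report["device_name"] = torch.cuda.get_device_name(index)
        # (major, minor) tuple, i.e. the CUDA cc discussed earlier
        report["capability"] = torch.cuda.get_device_capability(index)
    return report


for key, value in cuda_report().items():
    print(f"{key}: {value}")
```

If `cuda_available` comes out as False although the build finished, the CUDA-related keys are skipped rather than raising an error.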


Result in advance:

Cuda needs to be installed in addition to the display driver, and how depends on the framework: Tensorflow needs the system install, Pytorch does not (unless you build it from source).

Mind that "CUDA Toolkit" (standalone) and cudatoolkit (conda) are different!

####

Details (only fyi):

Why not just test an installation that needs cuda to find out? Going to https://pytorch.org/get-started/locally/, you get

conda install pytorch torchvision cudatoolkit=10.2 -c pytorch

as the installation command for the conda prompt. It chooses to install version 10.2. It would not install cuda if that came with the display driver. The installation then installs a cuda toolkit:

Then we see that the cudatoolkit-10.2.89 | 317.2 MB is probably too large to be plausibly included in the display driver. In C:\Program Files (x86)\NVIDIA Corporation, there are only three cuda-named dll files of a few hundred KB.

P.S.: The cuda 11.0 mentioned in the release notes only gives us the support information, not the actual installation. I have had a look at the release notes as well. They list cuda 11.0 under "Software Module Versions", yes. Yet later, under "New Features and Other Changes", they just say "Supports CUDA 11.0."; see https://us.download.nvidia.com/Windows/451.67/451.67-win10-win8-win7-release-notes.pdf.

From https://stackoverflow.com/questions/9727688/how-to-get-the-cuda-version:

Using nvidia-smi to get the version shown in the top right is rejected as wrong, since it only shows which version is supported. It does not show whether cuda is actually installed. @BruceYo comments: [The command nvidia-smi] "will display CUDA Version even when no CUDA is installed." This suggests again that cuda is not included in the display driver installation.
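To see what is actually installed rather than merely supported, you can ask the toolkit's own compiler, nvcc, instead of nvidia-smi. Here is a minimal Python sketch, assuming `nvcc --version` prints a line such as "Cuda compilation tools, release 11.0, V11.0.194". The function names are made up for illustration.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(output):
    """Extract the release number (e.g. '11.0') from 'nvcc --version' output."""
    match = re.search(r"release\s+(\d+\.\d+)", output)
    return match.group(1) if match else None


def installed_toolkit_release():
    """Return the release of the nvcc found on PATH, or None if there is none."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    result = subprocess.run([nvcc, "--version"], capture_output=True, text=True)
    return parse_nvcc_release(result.stdout)
```

A result of None from `installed_toolkit_release()` only means that no nvcc is on the PATH of the current prompt. That fits the point above: the display driver alone does not provide the standalone CUDA Toolkit.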